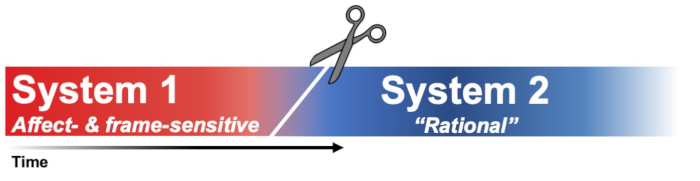

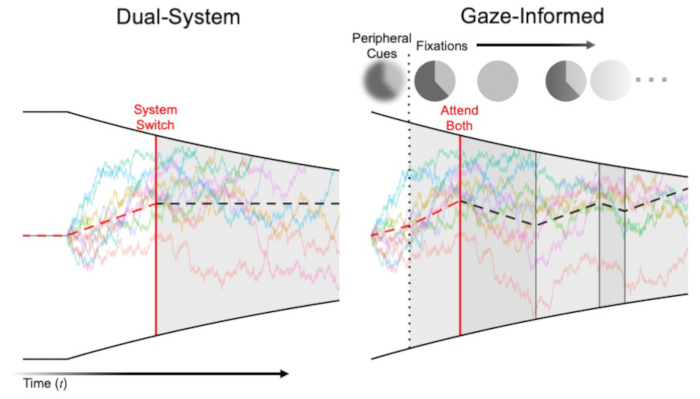

What causes the framing effect? One explanation, based in dual-system models of behavior, is that fast emotional responses (System 1) to perceived gains or losses pull us in opposite directions unless we're able to reassert our rationality by engaging in slower, deliberative thinking (System 2). A way to test this prediction is to have people make decisions under strict time limits. In line with the dual-system account, framing effects get larger under time pressure (e.g., Guo et al., 2017)! The idea here is that time pressure cuts off the process before System 2 can correct System 1:

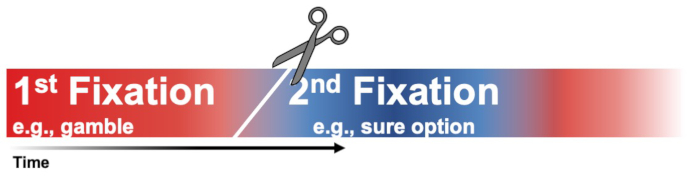

But what if time pressure is truncating a different sort of process? We hypothesized time pressure might cut visual information search short and that this can end up looking a lot like what's predicted by some (though not all) dual-system models. That is, instead of interrupting shifts between modes of thought, time pressure is interrupting shifts in attention:

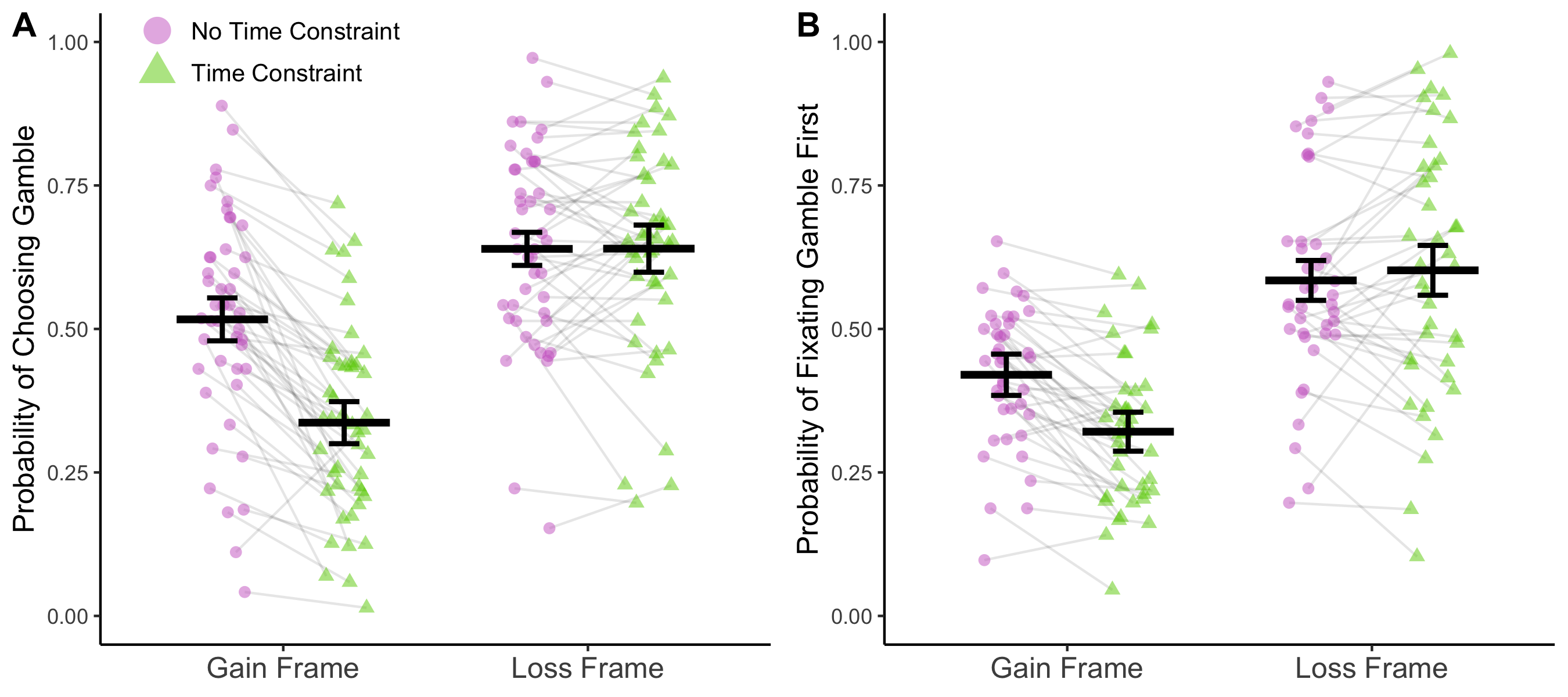

This is exactly what we've found. Recording people's eye movements while they completed the same task as in Guo et al. (2017), we found that under time pressure:

- People often chose without looking at both options

- People were unlikely to choose something they hadn't looked at

- People's early attention was biased in a way that mirrored the effects of time pressure on choice

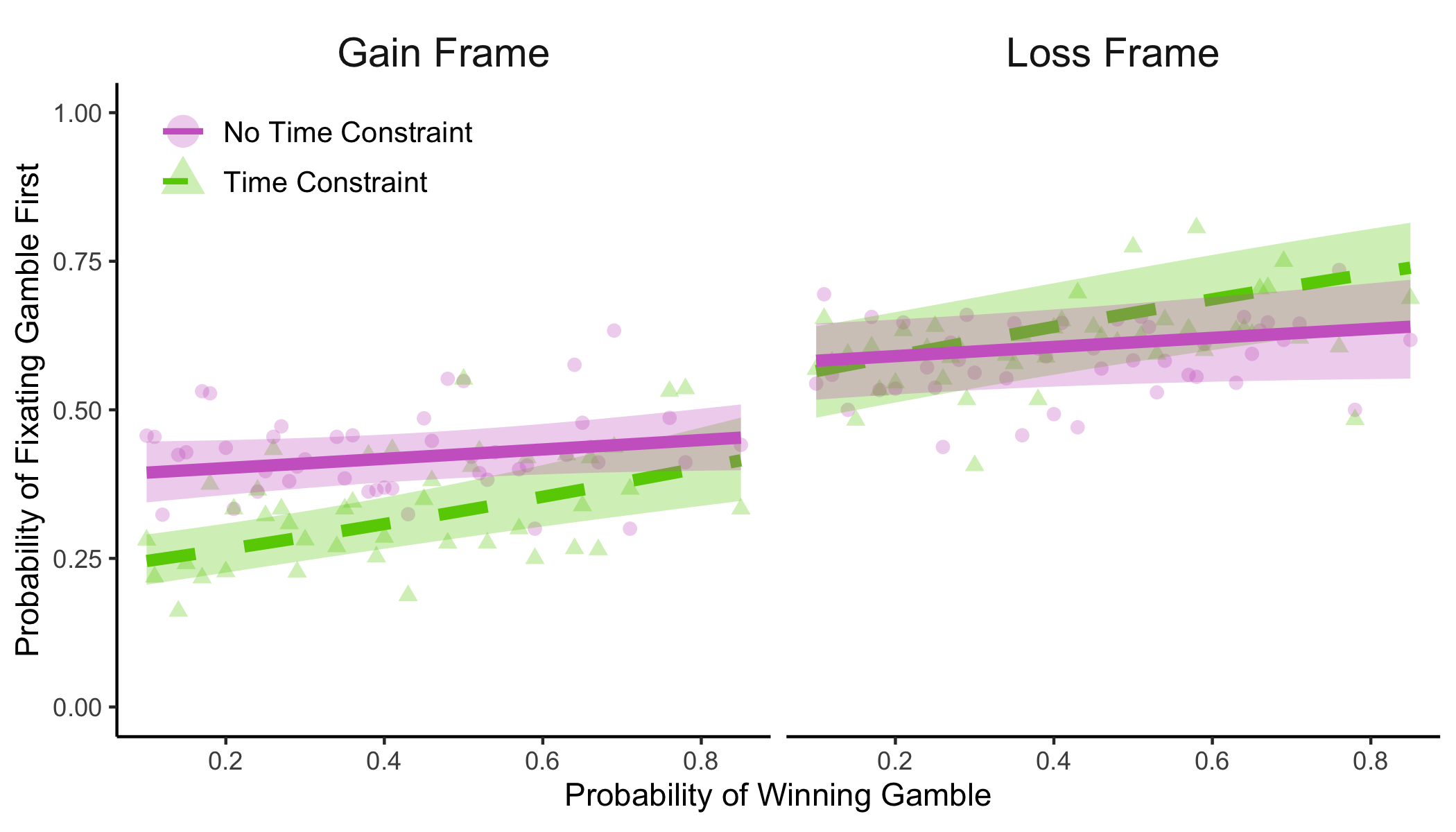

The plot on the right above shows the very first fixation to an option. This raises the question: how do people know where to look first? We thought they might be able to use their peripheral vision to detect the colors of the pie charts that were used to display the option probabilities. This predicts that first fixations should be influenced by gamble probability, even though people haven't looked at the gamble yet. And that's what we found:

This work is published in Psychological Science.

Next, we wanted to try some formal tests to see just how much attentional patterns can account for framing effects both with and without time pressure. To do this, we compared Diederich & Trueblood's (2018) elegant dual-system model of framing effects with an adapted version of a gaze-informed attentional drift diffusion model (Krajbich et al., 2010; Teoh et al., 2020).

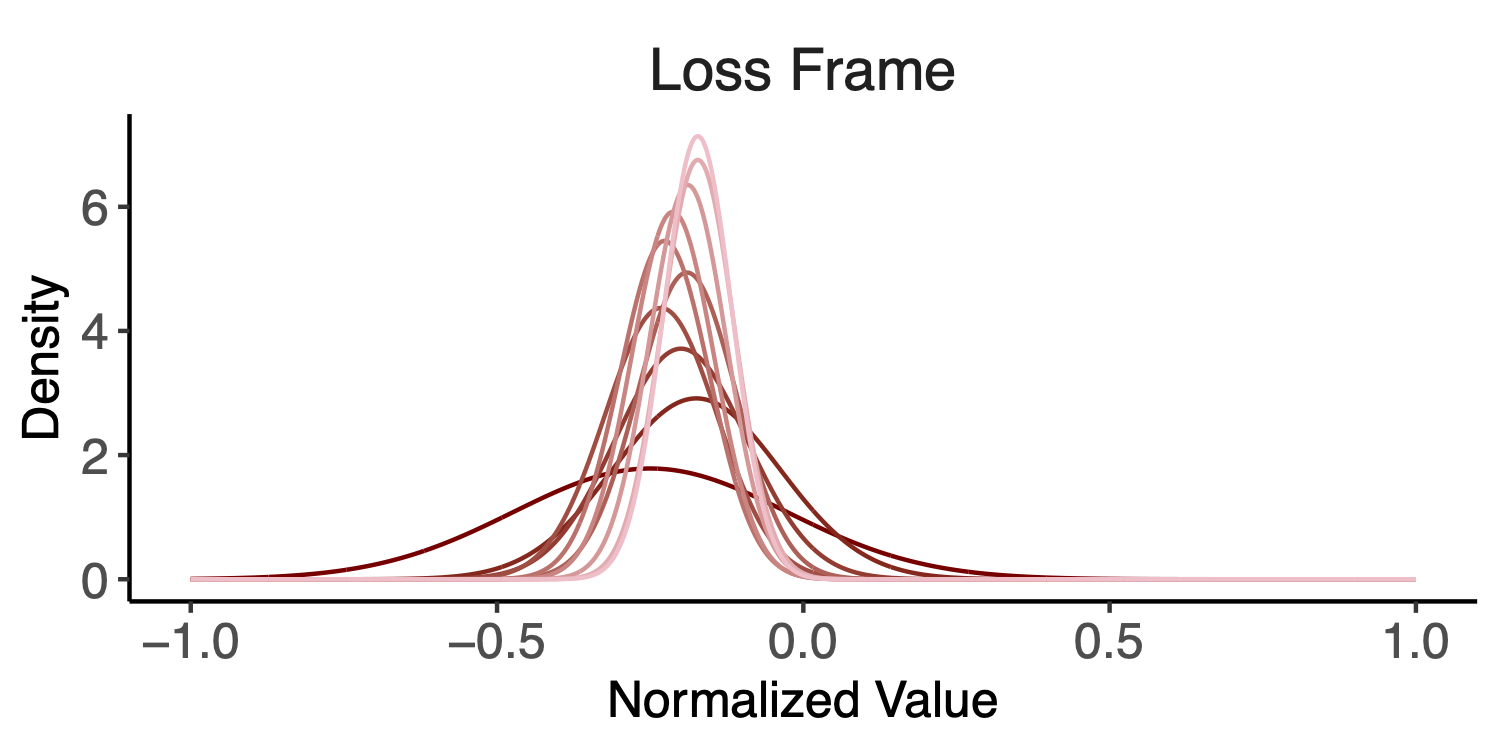

Both of these models depict choice as a gradual accumulation of noisy evidence until a threshold is met. In the dual-system model, the evidence that's accumulated at first is shaped by prospect theory parameters (System 1) until it later switches to accumulating evidence that is determined by expected value (System 2). Meanwhile, in the gaze-informed model, attention to an option amplifies the influence of its value in the evidence accumulation process. Critically, take a look at how evidence accumulation early on in the process compares:

The red segment of the evidence accumulation process represents System 1 in the dual-system model and time when only one option has been attended in the gaze-informed model. Note the similar effect System 1 and early attention can have on evidence early on in the choice process.
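To make the gaze-informed idea more concrete, here is a minimal sketch of attention-weighted evidence accumulation in the spirit of the attentional drift diffusion model (Krajbich et al., 2010). It is not our fitted model: the function names, the parameter values (`d`, `theta`, `noise_sd`, `threshold`), and the per-step gaze list are all illustrative assumptions. The key ingredient is that the value of the unattended option is discounted by `theta`, so looking at only one option early on pushes evidence toward it.

```python
import random

def drift(value_a, value_b, looking_at, d=0.01, theta=0.3):
    """Mean evidence change per time step (positive favors A, negative favors B).

    The unattended option's value is discounted by theta (0 <= theta <= 1).
    d and theta are illustrative values, not fitted parameters.
    """
    if looking_at == "a":
        return d * (value_a - theta * value_b)
    return d * (theta * value_a - value_b)

def simulate_trial(value_a, value_b, gaze, threshold=1.0,
                   noise_sd=0.05, max_steps=10_000, seed=0):
    """Accumulate noisy evidence until a threshold is hit.

    gaze: list of "a"/"b", one entry per time step; the last entry is
    reused if the trial runs longer than the list (an assumption made
    here for simplicity).
    Returns (choice, number_of_steps); choice is None if no threshold is hit.
    """
    rng = random.Random(seed)
    evidence = 0.0
    for step in range(max_steps):
        looking_at = gaze[min(step, len(gaze) - 1)]
        evidence += drift(value_a, value_b, looking_at) + rng.gauss(0.0, noise_sd)
        if evidence >= threshold:
            return "a", step + 1
        if evidence <= -threshold:
            return "b", step + 1
    return None, max_steps
```

With two equally valued options and the noise switched off, `simulate_trial(10, 10, ["a"], noise_sd=0.0)` ends in a choice of option A simply because A was the only option attended, which is the kind of early bias highlighted in the red segment.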

Comparing model fits, we found that the gaze-informed model does a better job than the dual-system model at explaining most people's choices and RTs. Furthermore, using the parameters from the dual-system model, we found people with stronger early attention biases tended to spend more time in System 1 before switching to System 2. This provides further support to the idea that dual-system models might sometimes be picking up on attentional dynamics. A preprint of this work will be available soon.

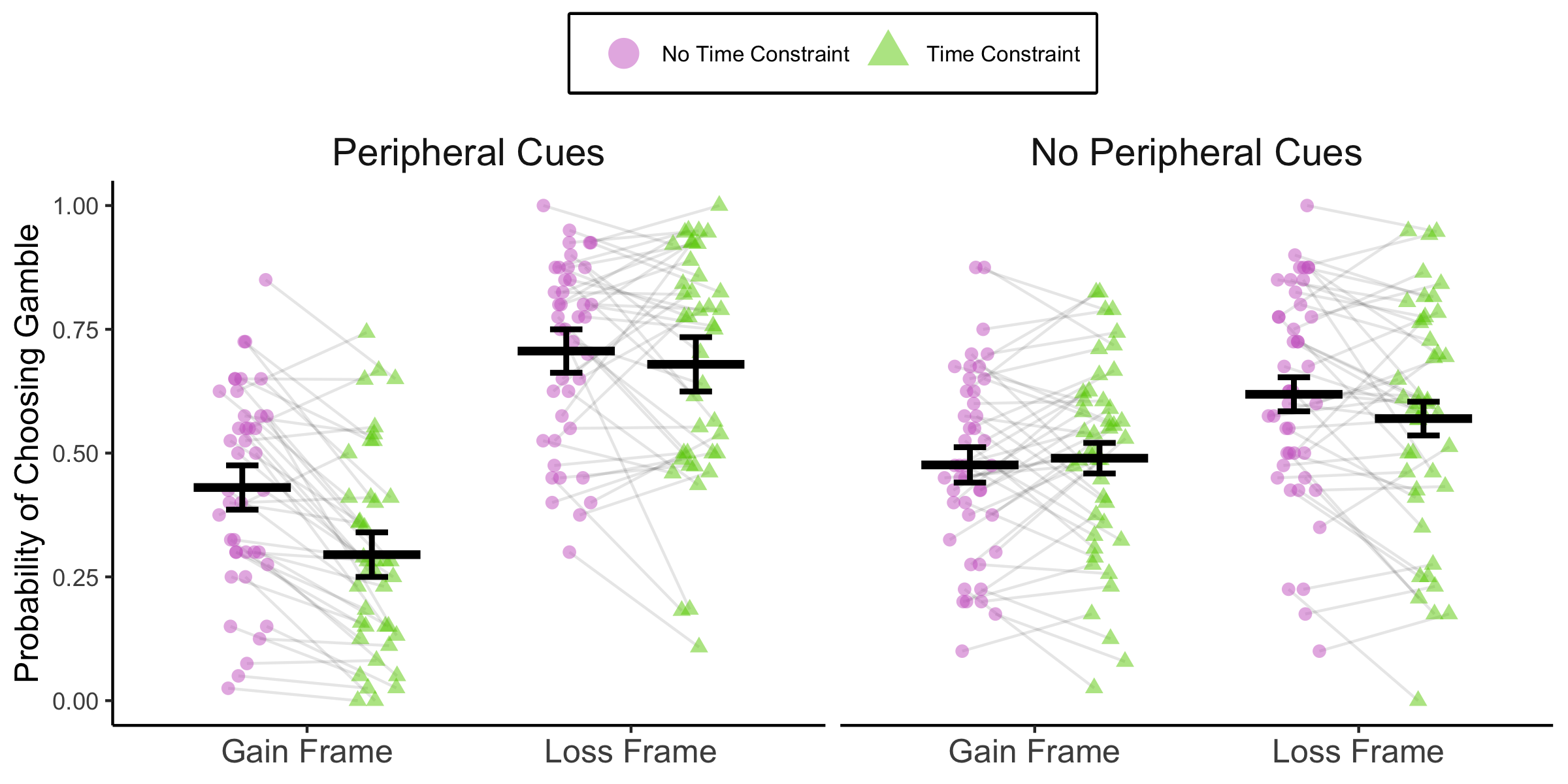

But how much does early attention really matter? To test this, we've run a few experiments where we simply remove the different pie chart colors that people had used as peripheral cues. When we do this, the overall effect of time pressure on framing effects goes away. In fact, the framing effect is now smaller under time pressure!

A preprint of this work is also coming soon.

We think these results clearly show that visual attention is critical for producing the framing effect in this particular task. Furthermore, our gaze-informed model shows that attentional patterns can account for the framing effects observed under no time pressure as well!

But none of these results answer the question: What's driving attention?

To explain attention, I've been working on a new computational model that I call the attention-guided Bayesian-learning model of framing effects. In a nutshell, the idea is that at each moment when making a decision our participants can opt for one of the following:

- Terminate information search and choose the option they currently think is best

- Gather more information about the options

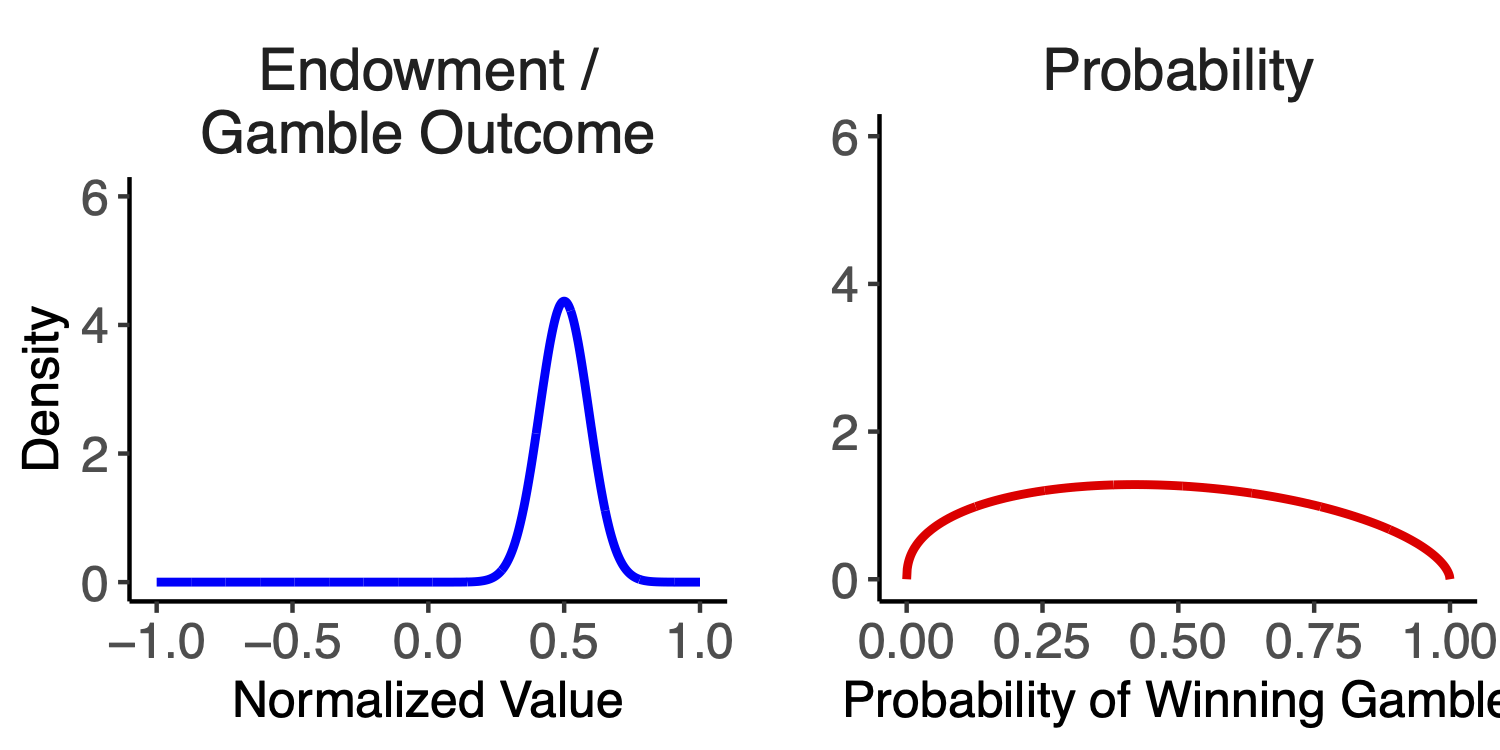

Gathering information means refining their beliefs about each option's attributes. For the gamble, participants can learn about its outcomes and its probability:

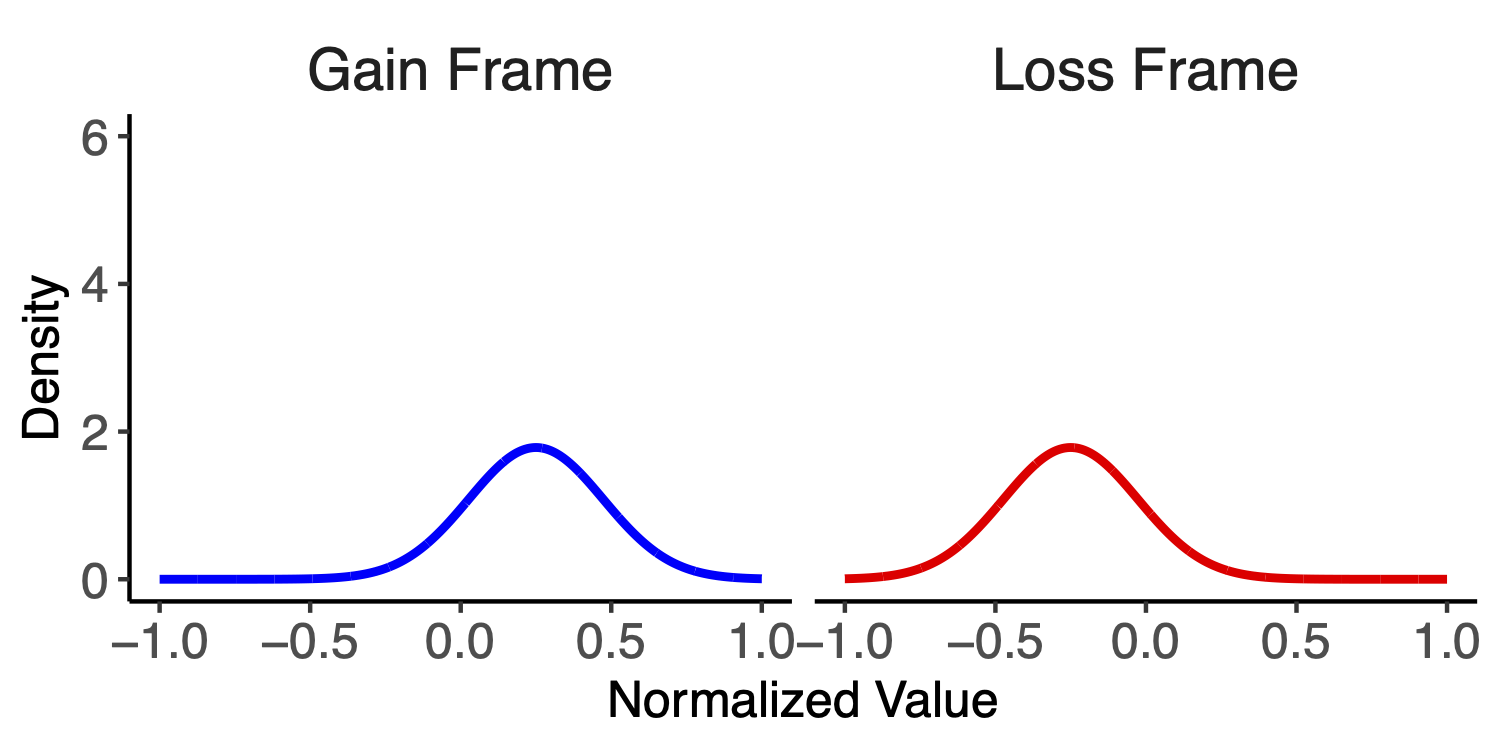

Meanwhile, the sure option has attributes for the seen and unseen framings (we assume they know the probability of 1.0 with confidence):

How do they update these beliefs? According to the model, by drawing noisy perceptual samples while looking at the visual information. They incorporate these samples to update their beliefs in a Bayesian sense:
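As a rough illustration of what one such update could look like, here is a sketch that assumes a Gaussian belief about a single attribute and Gaussian perceptual noise with known variance. Both are simplifying assumptions made for this example, and `update_belief` is a hypothetical helper rather than the model's actual code. Under those assumptions, the posterior mean is a precision-weighted average of the prior mean and the new sample, and the posterior variance shrinks with every sample.

```python
def update_belief(prior_mean, prior_var, sample, sample_var):
    """Conjugate Gaussian update of a belief after one noisy perceptual sample.

    prior_mean, prior_var: current belief about an attribute.
    sample: the noisy observation drawn while looking at the attribute.
    sample_var: variance of the perceptual noise (assumed known here).
    Returns (posterior_mean, posterior_var).
    """
    prior_precision = 1.0 / prior_var
    sample_precision = 1.0 / sample_var
    post_var = 1.0 / (prior_precision + sample_precision)
    post_mean = post_var * (prior_precision * prior_mean
                            + sample_precision * sample)
    return post_mean, post_var
```

For example, starting from a belief with mean 0 and variance 1 and observing a sample of 2 with noise variance 1, `update_belief(0.0, 1.0, 2.0, 1.0)` moves the mean halfway to the sample and halves the variance.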

Importantly, if you want to learn, you should inspect the thing you're the most unsure about. And that's one way attention works in our model. A decision-maker's attention is drawn to the option she knows the least about. Additionally, attention can be drawn by beliefs about value.
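One simple way to picture this attention rule is as a priority score per option, where uncertainty (belief variance) raises priority and believed value can add to it. The sketch below is only an assumption about how such a rule might be written: `next_fixation`, the additive combination, and `value_weight` are hypothetical and do not describe the model's actual attention mechanism.

```python
def next_fixation(beliefs, value_weight=0.0):
    """Pick which option to look at next.

    beliefs: dict mapping option name -> (belief_mean, belief_variance).
    value_weight: how strongly believed value attracts attention
                  (0.0 means attention follows uncertainty alone).
    Returns the name of the option with the highest priority.
    """
    def priority(name):
        mean, var = beliefs[name]
        return var + value_weight * mean

    return max(beliefs, key=priority)
```

With `beliefs = {"gamble": (5.0, 4.0), "sure": (6.0, 1.0)}`, the default rule looks at the gamble because its belief is more uncertain, while a large enough `value_weight` (say 5.0) shifts attention to the sure option because it is currently believed to be worth more.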

My model has another feature worth mentioning: people use mental conversions to sample the unseen framing of the sure option.

We're working out final details in this model but so far it's succeeding in accounting for both attention and choice in the framing effects task. Stay tuned!